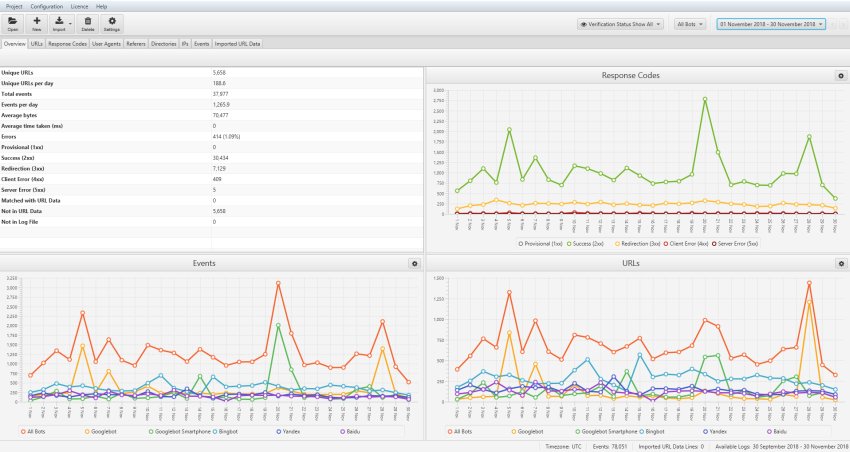

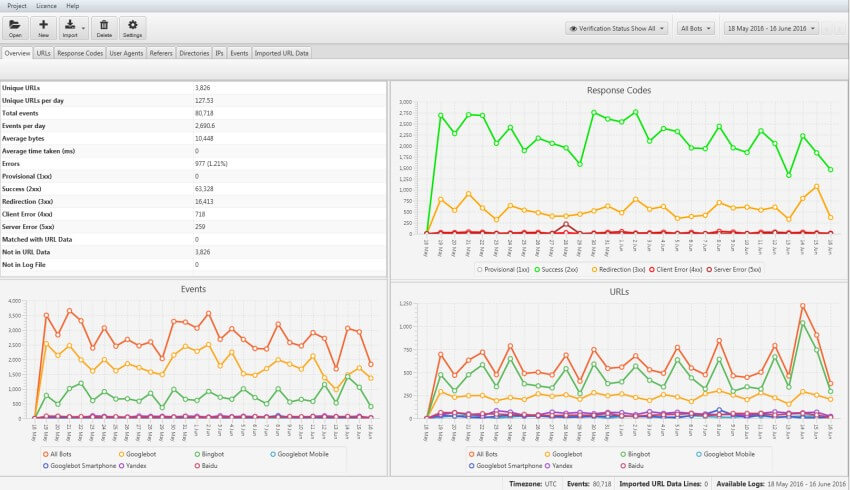

Log files are an incredibly powerful, yet underutilised, way to gain valuable insights about how each search engine crawls your site. They allow you to see exactly what the search engines have experienced over a period of time. Logs remove the guesswork, and the data allows you to view exactly what’s happening. This is why it’s vital for SEOs to analyse log files, even if the raw access logs can be a pain to get from the client (and/or the host, server and development team).

Log file analysis can broadly help you do the following 5 things:

1) Validate exactly what can, or can’t, be crawled.
2) View responses encountered by the search engines during their crawl.
3) Identify crawl shortcomings that might have wider site-based implications (such as hierarchy, or internal link structure).
4) See which pages the search engines prioritise, and might consider the most important.
5) Discover areas of crawl budget waste.

Combined with other data, such as a crawl or external links, logs can reveal even greater insights about search bot behaviour.

The Concept of Crawl Budget

Before we go straight into the guide, it’s useful to have an understanding of crawl budget, which is essentially the number of URLs Google can, and wants to, crawl for a site. Both ‘crawl rate limit’, which is based on how quickly a site responds to requests, and ‘crawl demand’, which reflects the popularity of URLs, how often they change and Google’s tolerance of ‘staleness’ in its index, have an impact on how much Googlebot will crawl. Google explains that “many low-value-add URLs can negatively affect a site’s crawling and indexing”.
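As a rough illustration of the uses listed above (which URLs a search bot requested, and which responses it encountered), here is a minimal Python sketch. It assumes the access log is in the common Apache/Nginx "combined" format, which may not match your server's configuration. The function name `summarise_bot_hits`, the `SAMPLE_LINES` data and the example URLs are all made up for illustration. Note that matching on the user-agent string alone does not prove a request really came from Googlebot, since user agents can be spoofed.

```python
import re
from collections import Counter

# Assumed layout (combined log format):
# ip ident user [time] "METHOD path PROTOCOL" status bytes "referer" "user-agent"
LOG_PATTERN = re.compile(
    r'^(?P<ip>\S+) \S+ \S+ \[(?P<time>[^\]]+)\] '
    r'"(?P<method>\S+) (?P<path>\S+) [^"]*" '
    r'(?P<status>\d{3}) \S+ "(?P<referer>[^"]*)" "(?P<agent>[^"]*)"$'
)


def summarise_bot_hits(lines, bot_token="Googlebot"):
    """Count requests per URL and per status code for one bot.

    Lines that do not match the assumed format are skipped, as are
    requests whose user agent does not contain bot_token.
    """
    by_path = Counter()
    by_status = Counter()
    for line in lines:
        match = LOG_PATTERN.match(line.strip())
        if match is None:
            continue  # not in the assumed format
        if bot_token.lower() not in match["agent"].lower():
            continue  # a different client, e.g. a normal browser
        by_path[match["path"]] += 1
        by_status[match["status"]] += 1
    return by_path, by_status


# Hypothetical sample data, invented purely for this example.
GOOGLEBOT_UA = "Mozilla/5.0 (compatible; Googlebot/2.1; +http://www.google.com/bot.html)"
SAMPLE_LINES = [
    f'192.0.2.1 - - [10/Oct/2023:13:55:36 +0000] "GET /products/ HTTP/1.1" 200 5120 "-" "{GOOGLEBOT_UA}"',
    f'192.0.2.1 - - [10/Oct/2023:13:55:40 +0000] "GET /products/?sort=price HTTP/1.1" 200 5090 "-" "{GOOGLEBOT_UA}"',
    f'192.0.2.1 - - [10/Oct/2023:13:56:02 +0000] "GET /old-page HTTP/1.1" 404 312 "-" "{GOOGLEBOT_UA}"',
    '192.0.2.7 - - [10/Oct/2023:13:56:10 +0000] "GET /products/ HTTP/1.1" 200 5120 '
    '"https://example.com/" "Mozilla/5.0 (Windows NT 10.0; Win64; x64)"',
    f'192.0.2.1 - - [10/Oct/2023:13:57:15 +0000] "GET /products/ HTTP/1.1" 200 5120 "-" "{GOOGLEBOT_UA}"',
]

if __name__ == "__main__":
    paths, statuses = summarise_bot_hits(SAMPLE_LINES)
    print("Most requested URLs:", paths.most_common(3))
    print("Responses encountered:", dict(statuses))
```

In a summary like this, a parameterised URL such as `/products/?sort=price` or a repeatedly requested 404 would be the kind of entry worth investigating as possible crawl budget waste. To run it against a real file, you could pass an open file object instead of `SAMPLE_LINES`, since the function only needs an iterable of lines.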
